Hyperlocal bloggers to get right to council meetings – by law

Posted: August 23, 2012 Filed under: hyperlocal, local government, openlylocal | Tags: blogging, CLG, council meetings, DCLG, hyperlocal, openlylocal

Following on from our previous posts on the right to attend, report and record local council meetings, the Department for Communities and Local Government has announced that it will be changing the law to further open up council meetings.

In particular, the new regulations, which come into effect on September 10, will reduce the permitted reasons for holding meetings behind closed doors and widen the definition of media to cover “organisations that… provide news to the public by means of the internet”, thus including hyperlocal blogs.

Will Perrin at TalkAboutLocal has a good summary of some of the other issues in the announcement, but this is important news for hyperlocal bloggers, particularly those that have faced obstruction and restrictions from their local councils.

Planning Alerts: community scrapers ahoy!

Posted: May 10, 2012 Filed under: hyperlocal, local government, mapping, open data, openlylocal, planning | Tags: hyperlocal, local data, opendata, planning, Planning Applications, PlanningAlerts

This post is by Andrew Speakman, who’s coordinating OpenlyLocal’s planning application work.

We can now report good progress on our plan to develop community scrapers to underpin the new incarnation of PlanningAlerts.com. The plan applies to those councils that use non-standard planning systems and our latest estimate is that there are around 100 of these sites.

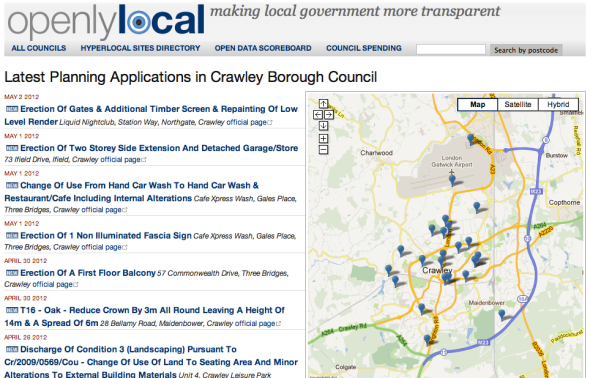

There are now 13 successful working scrapers with more on the way and some of the £75 bounties have already been paid out. The data from these scrapers is being regularly uploaded into OpenlyLocal using the Scraperwiki API, and you can see the results here with Crawley Council:

The list of the authorities being scraped by this method is as follows:

- Aberdeen

- Aberdeenshire

- Brent

- Broxbourne

- Calderdale

- Carmarthenshire

- Crawley

- East Sussex

- Herefordshire

- Isle of Wight

- Nuneaton and Bedworth

- Telford and Wrekin

- Wokingham

This is still very much a work in progress, and those in the above list that we’ve linked to are all running well and helping collect up-to-date planning applications with locations, and as soon as we turn on the alerts system (currently being tested), will start sending out email alerts.

There are also some councils for which we’re importing the data but won’t yet be able to send alerts – Wokingham, from the above list, is an example – because they do not include postcodes in the planning application details, and our location coding is based on postcodes to ensure our data is fully open. If anyone from authorities such as Wokingham wants to rectify this situation, we’re more than happy to work with them.
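To make the postcode dependency concrete, here is a minimal Python sketch of the kind of check that decides whether an application can be geocoded for alerts. The regular expression is a simplified approximation of UK postcode formats rather than the full official rules, and the function names and sample addresses are hypothetical, not taken from OpenlyLocal’s code.

```python
import re

# Simplified UK postcode pattern: an approximation, not the full official rule set.
POSTCODE_RE = re.compile(
    r"\b([A-Z]{1,2}\d[A-Z\d]?)\s*(\d[A-Z]{2})\b", re.IGNORECASE
)


def extract_postcode(address):
    """Return a normalised postcode found in the address, or None."""
    match = POSTCODE_RE.search(address or "")
    if match is None:
        return None
    outward, inward = match.groups()
    return f"{outward.upper()} {inward.upper()}"


def can_send_alerts(application):
    """An application is only usable for alerts if its address has a postcode."""
    return extract_postcode(application.get("address")) is not None


# Illustrative, made-up addresses.
with_postcode = {"address": "1 Example Road, Crawley rh10 1aa"}
without_postcode = {"address": "Land north of Example Lane, Wokingham"}
print(can_send_alerts(with_postcode), can_send_alerts(without_postcode))
```

An application whose address has no recognisable postcode can still be imported and displayed; it just cannot be matched to a subscriber’s location.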

If you want to get involved in helping scrape the UK’s planning data and building an open database of planning applications for the whole of the UK, contact me at planning@openlylocal.com. Further details about data fields and the available planning authorities are defined in this shared Google spreadsheet.

Happy scraping.

Andrew

How to help build the UK’s open planning database: writing scrapers

Posted: March 29, 2012 Filed under: hyperlocal, local government, open data, openlylocal, planning | Tags: Councils, gov2.0, hyperlocal, local data, local government, opendata, Planning Applications, PlanningAlerts

This post is by Andrew Speakman, who’s coordinating OpenlyLocal’s planning application work.

As Chris wrote in his last post announcing OpenlyLocal’s progress in building an open database of planning applications, while we can do the importing from the main planning systems, if we’re really going to cover the whole country, we’re going to need the community’s help. I’m going to be coordinating this effort and so I thought it would be useful to explain how we’re going to do this (you can contact me at planning@openlylocal.com).

First, we’re going to use the excellent ScraperWiki as the main platform for writing external scrapers. It supports Python, Ruby and PHP, and has worked well for similar schemes. It also means the scraper is openly available and we can see it in action. We will then use the Scraperwiki API to upload the data regularly into OpenlyLocal.

Second, we’re going to break the job into manageable chunks by focusing on target groups of councils, and just to sweeten things – as if building a national open database of planning applications wasn’t enough 😉 – we’re going to offer small bounties (£75) for successful scrapers for these councils.

We have some particular requirements designed to make the system maintainable, and do things the right way, but not many are fixed in stone, so feel free to respond with suggestions if you want to do it in a different way.

For example, the scraper should keep itself current (running on a daily basis), but also behave nicely (not putting an excessive load on Scraperwiki or the target website by trying to get too much data in one go). In addition we propose that the scrapers should operate by updating current applications on a daily basis and also make inroads into the backlog by gathering a batch of previous applications.

We have set up three example scrapers that operate in the way we expect: Brent (Ruby), Nuneaton and Bedworth (Python) and East Sussex (Python). These scrapers perform 4 operations, as follows:

- Create new database records for any new applications that have appeared on the site since the last run and store the identifiers (uid and url).

- Create new database records of a batch of missing older applications and store the identifiers (uid and url). Currently the scrapers are set up to work backwards from the earliest stored application towards a target date in the past.

- Update the most current applications by collecting and saving the full application details. At the moment the scrapers update the details of all applications from the past 60 days.

- Update the full application details of a batch of older applications where the uid and url have been collected (as above) but the application details are missing. At the moment the scrapers work backwards from the earliest “empty” application towards a target date in the past.
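The four operations above can be sketched in Python roughly as follows. This is an illustration only, not the code of the Brent, Nuneaton and Bedworth or East Sussex scrapers: `site` is a hypothetical object offering `list_ids(start, end)` and `fetch_details(url)`, the store is a plain dict rather than a ScraperWiki table, and `BACKLOG_DAYS` and `BATCH_SIZE` are assumed values. Dates are kept as `date` objects here for easy comparison.

```python
from datetime import timedelta

UPDATE_WINDOW_DAYS = 60   # refresh window mentioned in the post
BACKLOG_DAYS = 14         # hypothetical: how far back each run reaches
BATCH_SIZE = 10           # hypothetical: cap on older detail fetches per run


def run_once(store, state, site, now, target_date):
    """One daily run. `store` maps uid -> record; `state` holds date cursors."""
    today = now.date()
    latest = state.get("latest", today - timedelta(days=BACKLOG_DAYS))
    earliest = state.get("earliest", latest)

    # 1. Record identifiers for applications that appeared since the last run.
    for ident in site.list_ids(latest, today):
        store.setdefault(ident["uid"], dict(ident))
    state["latest"] = today

    # 2. Record identifiers for a batch of older applications, working
    #    backwards towards target_date one window at a time.
    if earliest > target_date:
        window_start = max(target_date, earliest - timedelta(days=BACKLOG_DAYS))
        for ident in site.list_ids(window_start, earliest):
            store.setdefault(ident["uid"], dict(ident))
        earliest = window_start
    state["earliest"] = earliest

    # 3. Refresh full details for every application in the recent window.
    cutoff = today - timedelta(days=UPDATE_WINDOW_DAYS)
    for rec in store.values():
        if rec["start_date"] >= cutoff:
            rec.update(site.fetch_details(rec["url"]))
            rec["date_scraped"] = now.isoformat()

    # 4. Fill in details for a limited batch of older, still-empty records.
    empty = [r for r in store.values()
             if r["start_date"] < cutoff and "date_scraped" not in r]
    empty.sort(key=lambda r: r["start_date"], reverse=True)
    for rec in empty[:BATCH_SIZE]:
        rec.update(site.fetch_details(rec["url"]))
        rec["date_scraped"] = now.isoformat()
```

One design choice to note: the sketch keeps an explicit backlog cursor in `state` instead of reading the earliest stored application each time, so that a window containing no applications does not stall the backwards crawl. The small window and batch sizes are what keep each run light on the target website.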

The data fields to be gathered for each planning application are defined in this shared Google spreadsheet. Not all the fields will be available on every site, but we want all those that are there.

Note the following:

- The minimal valid set of fields for an application is: ‘uid’, ‘description’, ‘address’, ‘start_date’ and ‘date_scraped’

- The ‘uid’ is the database primary key field

- All dates (except date_scraped) should be stored in ISO8601 format

- The ‘start_date’ field is set to the earliest of the ‘date_received’ or ‘date_validated’ fields, depending on which is available

- The ‘date_scraped’ field is a date/time (RFC3339) set to the current time when the full application details are updated. It should be indexed.
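As a sketch of how these field rules might be applied before a record is saved, the Python below derives `start_date`, stamps `date_scraped` and checks the minimal field set. The helper names are hypothetical, and dates are assumed to be stored as ISO 8601 `YYYY-MM-DD` strings, which sort correctly as plain text.

```python
from datetime import datetime, timezone

REQUIRED_FIELDS = ("uid", "description", "address", "start_date", "date_scraped")


def derive_start_date(record):
    """Earliest available of date_received / date_validated, or None."""
    candidates = [record[key] for key in ("date_received", "date_validated")
                  if record.get(key)]
    # ISO 8601 calendar dates compare correctly as strings.
    return min(candidates) if candidates else None


def finalise(record, now=None):
    """Return a copy with start_date and date_scraped filled in."""
    now = now or datetime.now(timezone.utc)
    out = dict(record)
    out["start_date"] = derive_start_date(out)
    # A timezone-aware isoformat() string is a valid RFC 3339 timestamp.
    out["date_scraped"] = now.isoformat()
    return out


def is_valid(record):
    """True if every field in the minimal set is present and non-empty."""
    return all(record.get(field) for field in REQUIRED_FIELDS)


# Illustrative record with made-up values.
example = finalise({
    "uid": "12/00001/FUL",
    "description": "Single storey rear extension",
    "address": "1 Example Road",
    "date_received": "2012-03-20",
    "date_validated": "2012-03-18",
})
print(example["start_date"], is_valid(example))
```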

So how do you get started? Here’s a list of 10 non-standard authorities that you can choose from. Aberdeen, Aberdeenshire, Ashfield, Bath, Calderdale, Carmarthenshire, Consett, Crawley, Elmbridge, Flintshire. Have a look at the sites and then let me know if you want to reserve one and how long you think it will take to write your scraper.

Happy scraping.

Planning Alerts: first fruits

Posted: March 23, 2012 Filed under: api, hyperlocal, local government, mapping, open data, openlylocal, planning | Tags: Councils, gov2.0, hyperlocal, local data, Local Democracy, local government, opendata, Planning Applications, PlanningAlerts

Well, that took a little longer than planned…

[I won’t go into the details, but suffice to say our internal deadline got squeezed between the combination of a fast-growing website, the usual issues of large datasets, and that tricky business of finding and managing coders who can program in Ruby, get data, and be really good at scraping tricky websites.]

But I’m pleased to say we’re now well on our way to not just resurrecting PlanningAlerts in a sustainable, scalable way but a whole lot more too.

Where we’re heading: an open database of UK planning applications

First, let’s talk about the end goal. From the beginning, while we wanted to get PlanningAlerts working again – the simplicity of being able to put in your postcode and email address and get alerts about nearby planning applications is both useful and compelling – we also knew that if the service was going to be sustainable, and serve the needs of the wider community we’d need to do a whole lot more.

Particularly with the significant changes in the planning laws and regulations that are being brought in over the next few years, it’s important that everybody – individuals, community groups, NGOs, other websites, even councils – has good and open access to not just the planning applications in their area, but in the surrounding areas too.

In short, we wanted to create the UK’s first open database of planning applications, free for reuse by all.

That meant not just finding when there was a planning application, and where (though that’s really useful), but also capturing all the other data too, and keeping that information updated as the planning application went through the various stages (the original PlanningAlerts just scraped the information once, when it was found on the website, and even then pretty much just got the address and the description).

Of course, were local authorities to publish the information as open data, for example through an API, this would be easy. As it is, with a couple of exceptions, it means an awful lot of scraping, and some pretty clever scraping too, not to mention upgrading the servers and making OpenlyLocal more scalable.

Where we’ve got to

Still, we’ve pretty much overcome these issues and now have hundreds of scrapers working, pulling the information into OpenlyLocal from well over a hundred councils, and now have well over half a million planning applications in there.

There are still some things to be sorted out – some of the council websites seem to shut down for a few hours overnight, meaning they appear to be broken when we visit them, others change URLs without redirecting to the new ones, and still others are just, well, flaky. But we’ve now got to a stage where we can start opening up the data we have, for people to play around with, find issues with, and start to use.

For a start, each planning application has its own permanent URL, and the information is also available as JSON or XML:

There’s also a page for each council, showing the latest planning applications, and the information here is available via the API too:

There’s also a GeoRSS feed for each council too allowing you to keep up to date with the latest planning applications for your council. It also means you can easily create maps or widgets for the council, showing the latest applications of the council.
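To show how a GeoRSS feed can be turned into map points, here is a small Python sketch using only the standard library. The feed’s real layout is not shown in this post, so the sample below is a made-up RSS 2.0 fragment using the GeoRSS-Simple `georss:point` element (latitude then longitude); the item contents are invented for illustration.

```python
import xml.etree.ElementTree as ET

GEORSS_NS = {"georss": "http://www.georss.org/georss"}

# Hypothetical sample feed; the real feed may be structured differently.
SAMPLE_FEED = """<rss version="2.0" xmlns:georss="http://www.georss.org/georss">
  <channel>
    <title>Example planning applications</title>
    <item>
      <title>12/00001/FUL</title>
      <description>Single storey rear extension</description>
      <georss:point>51.1091 -0.1872</georss:point>
    </item>
    <item>
      <title>12/00002/FUL</title>
      <description>No location given</description>
    </item>
  </channel>
</rss>"""


def parse_items(xml_text):
    """Return title and coordinates for every item that carries a point."""
    root = ET.fromstring(xml_text)
    items = []
    for item in root.iter("item"):
        point = item.findtext("georss:point", namespaces=GEORSS_NS)
        if point is None:
            continue  # skip items without a location
        lat, lon = (float(part) for part in point.split())
        items.append({"title": item.findtext("title"), "lat": lat, "lon": lon})
    return items


for entry in parse_items(SAMPLE_FEED):
    print(entry["title"], entry["lat"], entry["lon"])
```

The resulting list of titles and coordinates is the sort of input a map widget would need.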

Finally, Andrew Speakman, who’d coincidentally been doing some great stuff in this area, has joined the team as Planning editor, to help coordinate efforts and liaise with the community (more on this below).

What’s next

The next main task is to reinstate the original PlanningAlert functionality. That’s our focus now, and we’re about halfway there (and aiming to get the first alerts going out in the next 2-3 weeks).

We’ve also got several more councils and planning application systems to add, and this should bring the number of councils we’ve got on the system to between 150 and 200. This will be an ongoing process, over the next couple of months. There’ll also be some much-overdue design work on OpenlyLocal so that the increased amount of information on there is presented to the user in a more intuitive way – please feel free to contact us if you’re a UX person/designer and want to help out.

We also need to improve the database backend. We’ve been using MySQL exclusively since the start, but MySQL isn’t great at spatial (i.e. geographic) searches, restricting the sort of functionality we can offer. We expect to sort this in a month or so, probably moving to PostGIS, and after that we can start to add more features, finer grained searches, and start to look at making the whole thing sustainable by offering premium services.
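For a sense of what a spatial search involves, the Python sketch below finds applications within a radius of a point using the haversine great-circle formula. It is an illustration of the problem, not a description of how OpenlyLocal does or will do it: a spatial database would normally answer this with an index rather than by checking every row, and the record layout here is assumed.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres between two lat/lon points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def nearby(applications, lat, lon, radius_km):
    """Linear scan: keep applications within radius_km of (lat, lon)."""
    return [app for app in applications
            if haversine_km(lat, lon, app["lat"], app["lon"]) <= radius_km]


# Made-up sample points for illustration.
sample = [
    {"uid": "near", "lat": 51.501, "lon": -0.100},
    {"uid": "far", "lat": 52.500, "lon": -0.100},
]
print([app["uid"] for app in nearby(sample, 51.5, -0.1, 1.0)])
```

A scan like this touches every application on every query, which gets expensive as the dataset grows; that cost is the reason to want spatial indexing in the database.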

We’ll be working too on liaising with councils who want to offer their applications via an API – as the ever pioneering Lichfield council already does – or a nightly data dump. This not only does the right thing in opening up data for all to use, but also means we don’t have to scrape their websites. Lichfield, for example, uses the Idox system, and the web interface for this (which is what you see when you look at a planning application on Lichfield’s website) spreads the application details over 8 different web pages, but the API makes this available on a single URL, reducing the work the server has to do.

Finally, we’re going to be announcing a bounty scheme for the scraper/developer community to write scrapers for those areas that don’t use one of the standard systems. Andrew will be coordinating this, and will be blogging about this sometime in the next week or so (and you can contact him at planning at openlylocal dot com). We’ll also be tweeting progress at @planningalert.

Thanks for your patience.

PlanningAlerts is dead, long-live PlanningAlerts

Posted: October 10, 2011 Filed under: hyperlocal, local government, mapping, open data, openlylocal | Tags: development, hyperlocal, local, local data, Local Democracy, local government, Open Government, planning, Planning Applications, PlanningAlerts

One of the first and best examples of how data could make a difference to ordinary people’s lives was the inspirational PlanningAlerts.com, built by Richard Pope, Mikel Maron, Sam Smith, Duncan Parkes, Tom Hughes and Andy Armstrong.

In doing one simple thing – allowing ordinary people to subscribe to an email alert when there was a planning application near them, regardless of council boundaries – it showed that data mattered, and more than that had the power to improve the interaction between government and the community.

It did so many revolutionary things and fought so many important battles that everyone in the open data world (and not just the UK) owes all those who built it a massive debt of gratitude. Richard Pope and Duncan Parkes in particular put masses of hours writing scrapers, fighting the battle to open postcodes and providing a simple but powerful user experience.

However, over the past year it had become increasingly difficult to keep the site going, with many of the scrapers falling into disrepair (aka scraper rot). Add to that the demands of a day job, and the cost of running a server, and it’s a tribute to both Richard and Duncan that they kept PlanningAlerts going for as long as they did.

So when Richard reached out to OpenlyLocal and asked if we were interested in taking over PlanningAlerts we were both flattered and delighted. Flattered and delighted, but also a little nervous. Could we take this on in a sustainable manner, and do as good a job as they had done?

Well after going through the figures, and looking at how we might architect it, we decided we could – there were parts of the problem that were similar to what we were already doing with OpenlyLocal – but we’d need to make sustainability a core goal right from the get-go. That would mean a business plan, and also a way for the community to help out.

Both of those had been given thought by both us and by Richard, and we’d come to pretty much identical ideas, using a freemium model to generate income, and ScraperWiki to allow the community to help with writing scrapers, especially for those councils that didn’t use one of the common systems. But we also knew that we’d need to accelerate this process using a bounty model, such as the one that’s been so successful for OpenCorporates.

Now all we needed was the finance to kick-start the whole thing, and we contacted Nesta to see if they were interested in providing seed funding by way of a grant. I’ve been quite critical of Nesta’s processes in the past, but to their credit they didn’t hold this against us, and more than that showed they were capable of, and eager about, working in a fast, lightweight & agile way.

We didn’t quite manage to get the funding or do the transition before Richard’s server rental ran out, but we did save all the existing data, and are now hard at work building PlanningAlerts into OpenlyLocal, and gratifyingly making good progress. The PlanningAlerts.com domain is also in the middle of being transferred, and this should be completed in the next day or so.

We expect to start displaying the original scraped planning applications over the next few weeks, and have already started work on scrapers for the main systems used by councils. We’ll post here, and on the OpenlyLocal and PlanningAlert twitter accounts as we progress.

We’re also liaising with PlanningAlerts Australia, who were originally inspired by PlanningAlerts UK, but have since considerably raised the bar. In particular we’ll be aiming to share a common data structure with them, making it easy to build applications based on planning applications from either source.

And, finally, of course, all the data will be available as open data, using the same Open Database Licence as the rest of OpenlyLocal.

A first look at the council spending data: £10bn, 1.5m payments, 60,000 companies

Posted: February 23, 2011 Filed under: finance, local government, open data, openlylocal, statistics

Like buses, you wait ages for local councils to publish their spending data, then a whole load come at once… and consequently OpenlyLocal has been importing the data pretty much non-stop for the past month or so.

We’ve now imported spending data for over 140 councils with more being added each day, and now have over a million and a half payments to suppliers, totalling over £10 billion. I think it’s worth repeating that figure: Ten Billion Pounds, as it’s a decent chunk of change, by anybody’s measure (although it’s still only a fraction of all spending by councils in the country).

Along with that we’ve also made loads of improvements to the analysis and data, some visible, others not so much (we’ve made loads of much-needed back-end improvements now that we’ve got so much more data), and to mark breaking the £10bn figure I thought it was worth starting a series of posts looking at the spending dataset.

Let’s start by having a look at those headline figures (we’ll be delving deeper into the data for some more heavyweight data-driven journalism over the next few weeks):

144 councils. That’s about 40% of the 354 councils in England (including the GLA). Some of the others we just haven’t yet imported (we’re adding them at about 2 a day); others have problems with the CSV files they are publishing (corrupted or invalid files, or where there’s some query about the data itself), and where there’s a contact email we’ve notified them of this.

The rest are refusing to publish the CSV files specified in the guidelines, deciding to make it difficult to automatically import by publishing an Excel file or, worse, a PDF (and here I’d like to single out Birmingham council, the biggest in the UK, which shamefully is publishing its spending only as a PDF, and even then with almost no detail at all. One wonders what they are hiding).

£10,184,169,404 in 1,512,691 transactions. That’s an average transaction value of £6,732 per payment. However this is not uniform across councils, varying from an average transaction value of £669 for Poole to £46,466 for Barnsley. (In future posts, I’ll perhaps have a look at using the R statistical language to do some histograms on the data, although I’d be more than happy if someone beat me to that).

194,128 suppliers. What does this mean? To be accurate, this is the total number of supplying relationships between the councils and the companies/people/things they are paying.

Sometimes a council may have (or appear to have) several supplier relationships with the same company (charity/council/police authority), using different names or supplier IDs. This is sometimes down to a mistake in keying in the data, or for internal reasons, but either way it means several supplier records are created. It’s also worth noting that redacted payments are often grouped together as a single ‘supplier’, as the council may not have given any identifier to show that a redacted payment of £50,000 to a company (and in general there’s little reason to redact such payments) is to a different recipient than a redacted payment of £800 to a foster parent, for example.

However, using some clever matching and with the help of the increasing number of users who are matching suppliers to companies/charities and other entities on OpenlyLocal (just click on ‘add info’ when you’re looking at a supplier you think you can match to a company or charity), we’ve matched about 40% of these to real-world organisations such as companies and charities.

While that might not seem very high, a good proportion of the rest will be sole-traders, individuals, or organisations we’ve not yet got a complete list of (Parish and Town councils, for example). And what it does mean is we can start to get a first draft of who supplies local government. And this is what we’ve got:

66,165 companies, with total payments of £3,884,271,203 (£3.88 billion), 38.1% of the total £10bn, in 579,518 transactions, making an average payment of £6,702.

8,236 charities, with total payments of £415,878,177, 4.1% of the total, in 55,370 transactions, making an average payment of £7,511.
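The headline figures can be cross-checked with a few lines of Python. The numbers below are copied from this post; the variable and function names are only for illustration.

```python
# Figures quoted in the post (pounds, transaction counts).
TOTAL_VALUE = 10_184_169_404
TOTAL_TRANSACTIONS = 1_512_691

MATCHED = {
    "companies": (3_884_271_203, 579_518),
    "charities": (415_878_177, 55_370),
}


def summarise(value, transactions, grand_total=TOTAL_VALUE):
    """Share of all spending (percent) and average payment (pounds)."""
    return {
        "share_pct": round(100 * value / grand_total, 1),
        "average": value / transactions,
    }


overall_average = TOTAL_VALUE / TOTAL_TRANSACTIONS
print(f"overall average payment: £{overall_average:,.2f}")
for name, (value, transactions) in MATCHED.items():
    stats = summarise(value, transactions)
    print(f"{name}: {stats['share_pct']}% of total, "
          f"average £{stats['average']:,.2f}")
```

The shares come out at 38.1% and 4.1% as stated. The company average works out fractionally above £6,702, so that figure appears to have been truncated rather than rounded.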

Next time, we’ll look at the company suppliers in a little more detail, and later on the charities too, but for the moment, as you can see we’re listing the top 20 matched individual companies and charities that supply local government. Bear in mind a company like Capita does business with councils through a variety of different companies, and there’s no public dataset of the relationships between the companies, but that’s another story.

Finally, the whole dataset is available to download as open data under the same share-alike attribution licence as the rest of OpenlyLocal, including the matches to companies/charities that are receiving the money (the link is at the bottom of the Council Spending Data Dashboard). Be warned, however, it’s a very big file (there’s a row for every transaction), and so is too big for Excel (or even Google Fusion tables for that matter), so it’s of most use to those using a database, or doing academic research.

* Note: there are inevitably loads of caveats to this data, including that councils are (despite the guidance) publishing the data in different ways, including, occasionally, aggregating payments, and using over-aggressive redaction. It’s also, obviously, only 40% of the councils in England, although that’s a pretty big sample size. Finally there may be errors both in the data as published, and in the importing of it. Please do let us know at info@openlylocal.com if you see any errors, or figures that just look wrong.

Videoing council meetings redux: progress on two fronts

Posted: February 22, 2011 Filed under: hyperlocal, local government, openlylocal | Tags: Councils, data, hyperlocal, local, Local Democracy, local government, Open Government, youtube

Tonight, hyperlocal bloggers (and in fact any ordinary members of the public) got two great boosts in their access to council meetings, and their ability to report on them.

Windsor & Maidenhead this evening passed a motion to allow members of the public to video the council meetings. This follows on from my abortive attempt late last year to video one of W&M’s council meetings – see the full story here, video embedded below – following on from the simple suggestion I’d made a couple of months ago to let citizens video council meetings. I should stress that that attempt had been pre-arranged with a cabinet member, in part to see how it would be received – not well as it turned out. But having pushed those boundaries, and with I dare say a bit of lobbying from the transparency-minded members, Windsor & Maidenhead have made the decision to fully open up their council meetings.

Separately, though perhaps not entirely coincidentally, the Department for Communities & Local Government tonight issued a press release which called on councils across the country to fully open up their meetings to the public in general and hyperlocal bloggers in particular.

Councils should open up their public meetings to local news ‘bloggers’ and routinely allow online filming of public discussions as part of increasing their transparency, Local Government Secretary Eric Pickles said today.

To ensure all parts of the modern-day media are able to scrutinise Local Government, Mr Pickles believes councils should also open up public meetings to the ‘citizen journalist’ as well as the mainstream media, especially as important budget decisions are being made.

Local Government Minister Bob Neill has written to all councils urging greater openness and calling on them to adopt a modern day approach so that credible community or ‘hyper-local’ bloggers and online broadcasters get the same routine access to council meetings as the traditional accredited media have.

The letter sent today reminds councils that local authority meetings are already open to the general public, which raises concerns about why in some cases bloggers and press have been barred.

Importantly, the letter also tells councils that giving greater access will not contradict data protection law requirements, which was the reason I was given for W&M prohibiting me filming.

So, hyperlocal bloggers, tweet, photograph and video away. Do it quietly, do it well, and raise merry hell in your blogs and local press if you’re prohibited, and maybe we can start another scoreboard to measure the progress. To those councils who videocast, make sure that the videos are downloadable under the Open Government Licence, and we’ll avoid the ridiculousness of councillors being disciplined for increasing access to the democratic process.

And finally if we can collectively think of a way of tagging the videos on Youtube or Vimeo with the council and meeting details, we could even automatically show them on the relevant meeting page on OpenlyLocal.

Videoing council meetings revisited: the limits of openness in a transparent council

Posted: December 8, 2010 Filed under: hyperlocal, local government, openlylocal, politics | Tags: Councils, gov2.0, hyperlocal, Local Democracy, Open Government, transparency, videocasting, youtube

A couple of months ago, I blogged about the ridiculous situation of a local councillor being hauled up in front of the council’s standards committee for posting a council webcast onto YouTube, and worse, being found against (note: this has since been overturned by the First Tier Tribunal for Local Government Standards, but not without considerable cost for the people of Brighton).

At the time I said we should make the following demand:

Give the public the right to record any council meeting using any device using Flip cams, tape recorders, frankly any darned thing they like as long as it doesn’t disrupt the meeting.

Step forward councillor Liam Maxwell from the Royal Borough of Windsor & Maidenhead, who as the cabinet member for transparency has a personal mission to make RBWM the most transparent council in the country. I don’t see why you couldn’t do that at our council, he said.

So, last night, I headed over to Maidenhead for the scheduled council meeting to test this out, and either provide a shining example for other councils, or show that even the most ‘transparent’ council can’t shed the pomposity and self-importance that characterises many council meetings, and allow proper open access.

The video below, less than two minutes long, is the result, and as you can see, they chose the latter course:

Interestingly, when asked why videoing was not allowed, they claimed ‘Data Protection’, the catch-all excuse for any public body that doesn’t want to publish, or open up, something. Of course, this is nonsense in the context of a public meeting, and where all those being filmed were public figures who were carrying out a civic responsibility.

There’s also an interesting bit towards the end, when a councillor answered that they were ‘transparent’ in response to the observation that they were supposed to be open. This is the same old you-can-look-but-don’t-touch attitude that has characterised much of government’s interactions with the public (and works so well at excluding people from the process). Perhaps naively, I was a little shocked to hear this from this particular council.

So there you have it. That, I guess, is where the boundaries of transparency lie at Windsor & Maidenhead. Why not test them out at your council, and perhaps we can start a new scoreboard at OpenlyLocal to go with the open data scoreboard, and the 10:10 council scoreboard.

Not the way to build a Big Society: part1 NESTA

Posted: November 17, 2010 Filed under: finance, local government, open data, openlylocal | Tags: data, finance, gov2.0, local data, local government, nesta, spending

I took a very frustrating phone call earlier today from NESTA, an organisation I’ve not had any dealings with before, and don’t actually have a view about, or at least didn’t.

It followed from an email I’d received a couple of days earlier, which read:

I am contacting you about a project NESTA are currently working on in partnership with the Big Society Network called Your Local Budget.

Working with 10 pioneer local authorities, we are looking at how you can use participatory budgeting to develop new ways to give people a say in how mainstream local budgets are spent. Alongside this we will also be developing an online platform that enables members of the public to understand and scrutinise their local authority’s spending, and connect with each other to generate ideas for delivering better value for money in public spending.

We would like to share our thinking and get your thoughts on the online tool to get a sense of what is needed and where we can add value. You are invited to a round table discussion on Friday 19 November, 11am – 12.30pm at NESTA that will be chaired by Philip Colligan, Executive Director of the Public Services Lab. Following the meeting we intend to issue an invitation to tender for the online tool.

Apart from the short notice & terrible timing (it clashes with the Open Government Data Camp, to which you’d hope most of the people involved would be going), the main question I had was this:

Why?

I got the phone call because I couldn’t make the round table, and for some feedback, and this was the feedback I gave: I don’t understand why this is being done. At all.

Putting aside the participatory budgeting part (although this problem seems to be getting dealt with by Redbridge council and YouGov, whose solution is apparently being offered to all councils), there’s the question of the “online platform that enables members of the public to understand and scrutinise their local authority’s spending, and connect with each other to generate ideas for delivering better value for money in public spending.”

Excuse me? Most of the data hasn’t been published yet, and there are several known organisations and groups (including OpenlyLocal) that have publicly stated they are going to be importing this data and doing things with it – visualising it, and allowing different views and analysis. Additionally, OpenlyLocal is already talking with several newspaper groups to help them re-use the data, and we are constantly evolving how we match and present the data.

Despite this, Nesta seems to have decided that it’s going to spend public money on coming up with a tendered solution to solve a problem that may be solved for zero cost by the private sector. Now I’m no roll-back-the-government red-in-tooth-and-claw free marketeer, but this is crazy, and I said as much to the person from Nesta.

Is the roundtable to decide whether the project should be done, or what should be done? I asked. The latter, I was told. So, they’ve got some money and have decided they’re going to spend it, even though the need may not be there. At a time when welfare payments are being cut and essential services are being slashed, for this sort of thing to happen is frankly outrageous.

There are other concerns here too – I personally think websites such as this are not suitable for a tender process, as that doesn’t encourage or often even allow the sort of agile, feedback-led process that produces the best websites. Tender processes also favour those who make their living by tendering.

So, Nesta, here’s a suggestion. Park this idea for 12 months, and in the meantime give the money back to the government. If you want to act as an angel funder then act as such (and the ones I’ve come across don’t do tendering). A reminder, your slogan is ‘making innovation flourish’, but sometimes that means stepping back and seeing what happens. This is not the way to build a Big Society.

Open data, fraud… and some worrying advice

Posted: October 26, 2010 Filed under: finance, local government, open data, openlylocal | Tags: Councils, data, finance, fraud, ICO, LGA, local data, local government, opendata, spending 6 Comments

One of the most commonly quoted concerns about publishing public data on the web is the potential for fraud – and certainly the internet has opened up all sorts of new routes to fraud, from Nigerian email scams, to phishing for bank account logins, to key-loggers to identity theft.

Many of these work using two factors – the acceptance of things at face value (if it looks like an email from your bank, it is an email from them), and flawed processes designed to stop fraud but which inconvenience real users while making life easy for criminals.

I mention this because of some pending advice from the Local Government Association to councils regarding the publication of spending data, which strikes me as not just flawed, but highly dangerous and an invitation to fraudsters.

The issue surrounds something that may seem almost trivial, but bear with me – it’s important, and it’s off such trivialities that fraudsters profit.

In the original guidance for councils on publishing spending data we said that councils should publish both their internal supplier IDs and the supplier VAT numbers, as it would greatly aid the matching of supplier names to real-world companies, charities and other organisations, which is crucial in understanding where a local council’s money goes.

When the Local Government Association published its Guidance For Practitioners it removed those recommendations in order to prevent fraud. It has also suggested using the internal supplier ID as a unique key to confirm supplier identity. This betrays a startling lack of understanding, and worse opens up a serious vector to allow criminals to defraud councils of large sums of money.

Let’s take the VAT numbers first. The main issue here appears to be so-called missing trader fraud, whereby VAT is fraudulently claimed back from governments. Now it’s not clear to me that publishing VAT numbers for supplier names makes this fraud any easier, and you would think the Treasury, who recommend publishing the VAT numbers for suppliers in their guidance (PDF), would be alert to this (I’m told they did check with HMRC before issuing their guidance).

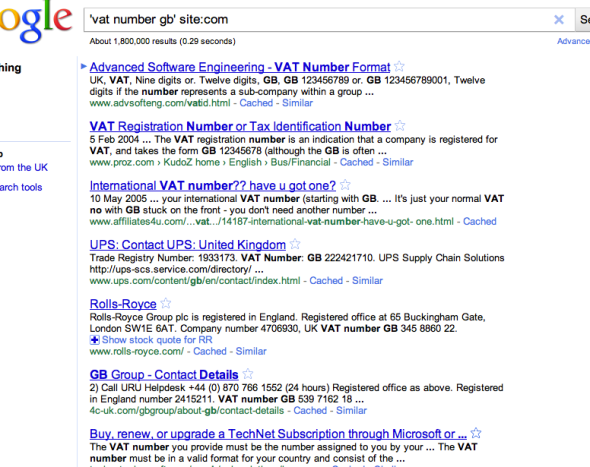

However, that’s not the point. If it’s about matching VAT numbers to supplier names there are already several routes for doing this, with the ability to retrieve tens of thousands of them in the space of an hour or so, including this one:

http://www.google.co.uk/#sclient=psy&hl=en&q=%27vat+number+gb%27+site:com

Click on that link and you’ll get something like this:

Whether you’re a programmer or not, you should be able to see that it’s a trivial matter to go through those thousands of results and extract the company name and VAT number, and bingo, you’ve got that which the LGA is so keen for you not to have. So those who are wanting to match council suppliers don’t get the help a VAT number would give, and fraudsters aren’t disadvantaged at all.
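To give a sense of how little effort that extraction takes, here is a minimal sketch in Python. It assumes the common nine-digit "GB" form of a UK VAT number, and the snippets, company names and numbers are made up for illustration (they are not real search results); the function name `extract_vat_numbers` is likewise just a hypothetical helper.

```python
import re

# Assumption: the common UK format, "GB" followed by nine digits,
# optionally spaced as "GB 123 4567 89". Other variants are ignored here.
VAT_PATTERN = re.compile(r"\bGB\s?(\d{3})\s?(\d{4})\s?(\d{2})\b", re.IGNORECASE)


def extract_vat_numbers(text):
    """Return the VAT numbers found in text, normalised to 'GB#########'."""
    return ["GB" + "".join(match.groups()) for match in VAT_PATTERN.finditer(text)]


# Hypothetical page snippets, invented for this example.
snippets = [
    "Example Widgets Ltd. VAT Number GB 123 4567 89. Registered in England.",
    "Sample Trading Co | VAT number: GB987654321",
]

for snippet in snippets:
    print(snippet.split(".")[0].split("|")[0].strip(), extract_vat_numbers(snippet))
```

Pulling out the company name reliably takes a little more work than the crude split used above, but not much.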

Now, let’s turn to the rather more serious issue of internal Supplier IDs. Let me make it clear here, when matching council or central government suppliers, internal Supplier IDs are useful, make the job easier, and the matching more accurate, and also help with understanding how much in total redacted payees are receiving (you’d be concerned if a redacted person/company received £100,000 over the course of a year, and without some form of supplier ID you won’t know that). However, it’s not some life-or-death battle over principle for me.
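The point about redacted payees can be illustrated with a short Python sketch. The field names (`supplier_id`, `payee`, `amount`) and the figures are assumptions made up for the example, not taken from any council's actual spending file; the idea is simply that a stable supplier ID lets you total payments even when the name is withheld.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical spending rows: the payee name is redacted, the supplier ID is not.
payments = [
    {"supplier_id": "S0042", "payee": "REDACTED", "amount": "60000.00"},
    {"supplier_id": "S0042", "payee": "REDACTED", "amount": "40000.00"},
    {"supplier_id": "S0099", "payee": "REDACTED", "amount": "250.00"},
]


def total_by_supplier(rows):
    """Sum payment amounts per supplier ID, using Decimal to avoid float rounding."""
    totals = defaultdict(Decimal)
    for row in rows:
        totals[row["supplier_id"]] += Decimal(row["amount"])
    return dict(totals)


for supplier_id, total in total_by_supplier(payments).items():
    print(supplier_id, total)
```

Without the ID, the three rows above would be indistinguishable "REDACTED" entries, and the larger total would go unnoticed.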

The reason the LGA, however, is advising councils not to publish them is much more serious, and dangerous. In short, they are proposing to use the internal Supplier ID as a key to confirm the supplier’s identity, and so allow the supplier to change details, including the supplier bank account (the case brought up here to justify this was the recent one of South Lanarkshire, which didn’t involve any information published as open data, just plain old fraudster ingenuity).

Just think about that for a moment, and then imagine that it’s the internal ID number they use for you in connection with paying your housing benefits. If you want to change your details, say you wanted to pay the money into a different bank account, you’d have to quote it – and just how many of us would have somewhere both safe to keep it and easy to find (and what about when you separated from your partner)?

Similarly, where and how do we really think suppliers are going to keep this ID (stuck on a post-it note to the accounts receivable’s computer screen?), and what happens when they lose it? How do they identify themselves to find out what it is, and how will a council go about issuing a new one should the old one be compromised – is there any way of doing this except by setting up a new supplier record, with all the problems that brings?

And how easy would it be to do a day or two’s temping in a council’s accounts department and do a dump/printout of all the Supplier IDs, and then pass them on to fraudsters? The possibilities – for criminals – are almost limitless, and the Information Commissioner’s Office should put a stop to this at once if it is not to lose a serious amount of credibility.

But there’s a bigger underlying issue here, and it’s not that organisations such as the LGA don’t get data (although that is a problem), it’s that such bodies think that by introducing processes they can engineer out all risk, and that leads to bad decisions. Tell someone that suppliers changing bank accounts is very rare and should always be treated with suspicion and fraud becomes more difficult; tell someone that they should accept internal supplier IDs as proof of identity and it becomes easy.

Government/big-company bureaucrats not only think like government/big-company bureaucrats, they build processes that assume everyone else does. The problem is that this both makes life more difficult for ordinary citizens (as most encounters with bureaucracy make clear), and also makes it easy for criminals (who by definition don’t follow the rules).